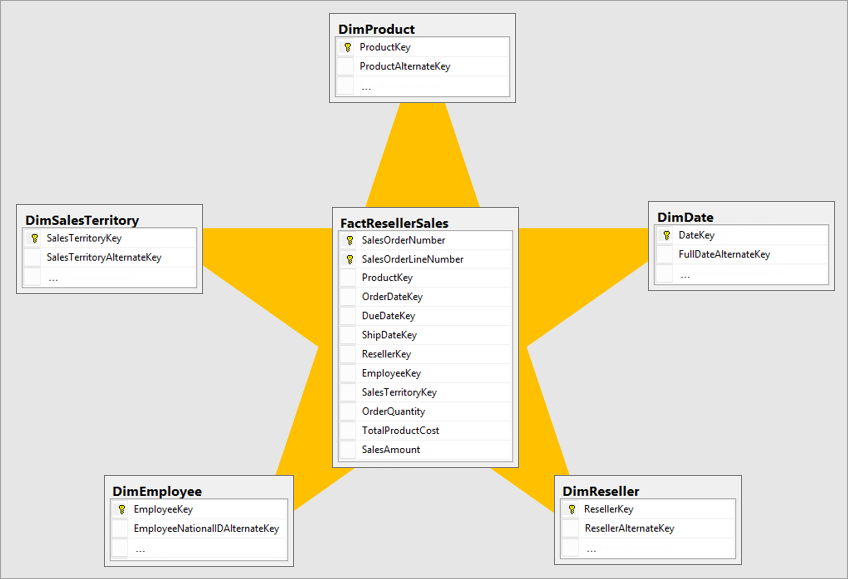

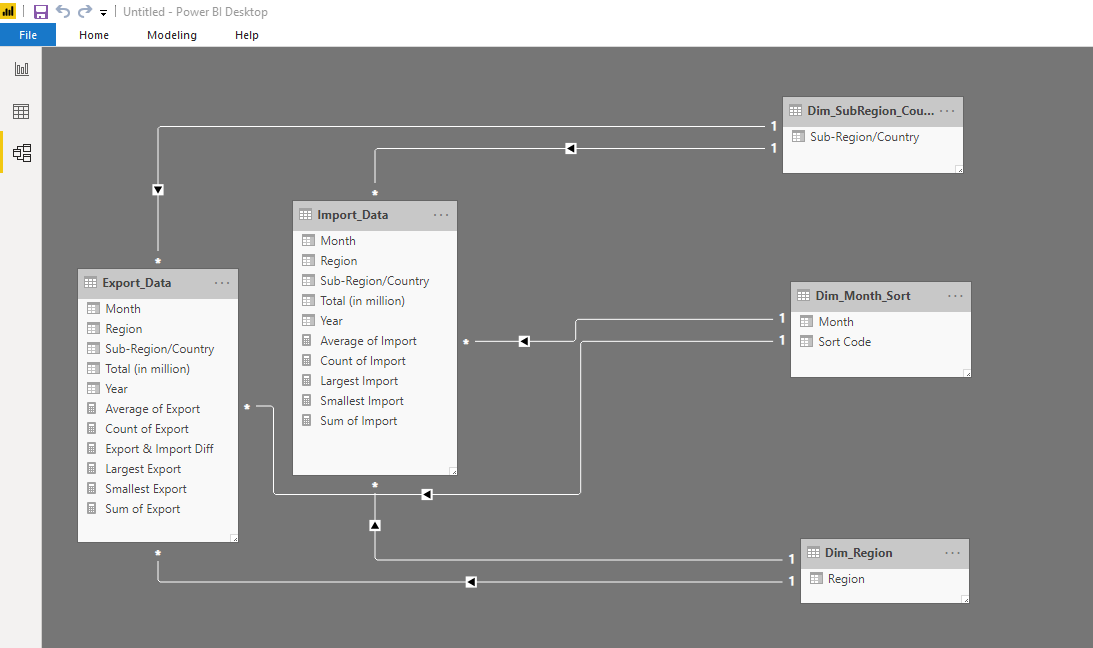

The following document describes the Data Warehousing and Modern BI technical pattern deployed via Cortana Intelligence Solutions.

- Architecture: A high-level description of the deployed components, building blocks, and resulting outputs.
- Data Flow: Describes the datasets created and the transforms applied across the various services to generate the star-schema model of the source OLTP data.
- Dataset: Overview of the Adventure Works OLTP dataset, the source OLTP database for this solution.
- Monitoring: Details on monitoring and setting up alarms for your warehousing pipeline.
- Visualizing with Power BI: A walkthrough on sourcing the OLAP data to visualize a sample Reseller Sales Dashboard using Power BI.
- Batch Load and Incremental Processing: Covers the Hive queries used, the tables created, and the procedures applied to perform the initial load and ingest incremental slices to support change data capture for the dimensions and facts.

In this solution, we demonstrate how a hybrid EDW scenario can be implemented on Azure using:

- Azure SQL Data Warehouse as a data mart to vend business-line-specific data.
- Azure Analysis Services as an analytical engine to drive reporting.
- Azure Blob Storage as a data lake to store raw data in its native format, in a flat architecture, until it is needed.
- Azure HDInsight as a processing layer to transform, sanitize, and load raw data into a de-normalized format suitable for analytics.
- Azure Data Factory as our orchestration engine to move, transform, monitor, and process data in a scheduled, time-slice-based manner.

Our scenario follows an Extract-Load-and-Transform (ELT) model. First, we extract data from an operational OLTP data source into Azure Blob Storage, which acts as the landing zone for the initially loaded data. We then transform the data to generate facts and dimensions, using Hive on Azure HDInsight as our processing engine. The processed data is then moved into Azure SQL Data Warehouse, which acts as a data mart for reporting and analysis. We then show how this data can be visualized with tools such as Power BI. Importantly, we also show how this entire architecture can be orchestrated and monitored through Azure Data Factory. To demonstrate this, we deploy both a batch pipeline, to showcase the initial bulk data load, and an incremental pipeline, to instrument change data capture for incoming data slices.

The following steps are performed as outlined in the chart above:

- Normalized OLTP data is cloned to Azure Blob Storage every hour. The copied data is partitioned by time slice at a 1-hour granularity.
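To make the hourly time-slice partitioning more concrete, here is a minimal Python sketch of how a copy timestamp could map to a one-hour slice and a blob folder. The `raw/<table>/<yyyy>/<MM>/<dd>/<HH>/` layout, the function names, and the example table are illustrative assumptions, not the folder structure the deployed pipeline necessarily uses.

```python
from datetime import datetime, timedelta
from typing import List


def slice_start(ts: datetime) -> datetime:
    """Floor a timestamp to the start of its one-hour slice."""
    return ts.replace(minute=0, second=0, microsecond=0)


def slice_path(table: str, ts: datetime, root: str = "raw") -> str:
    """Build a blob folder path for the slice containing ts.

    The layout is a hypothetical convention chosen for illustration.
    """
    start = slice_start(ts)
    return f"{root}/{table}/{start:%Y/%m/%d/%H}/"


def slices_between(start: datetime, end: datetime) -> List[datetime]:
    """List the start of every hourly slice overlapping [start, end)."""
    current = slice_start(start)
    result = []
    while current < end:
        result.append(current)
        current += timedelta(hours=1)
    return result


if __name__ == "__main__":
    copied_at = datetime(2017, 3, 5, 14, 37, 9)
    print(slice_path("SalesOrderHeader", copied_at))
    for s in slices_between(datetime(2017, 3, 5, 22, 15), datetime(2017, 3, 6, 1, 0)):
        print(s.isoformat())
```

With a layout like this, an incremental run only has to read the folder for the slice it is processing, while an initial bulk load can walk every slice in a date range.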
